Marketing budgets are flat. Expectations are rising. And the most experienced CMOs in the room are struggling to make the case for more resources. The problem isn't performance. It's proof.

Every CMO who has walked into a quarterly budget review in the last year knows what the conversation feels like. The pipeline numbers are solid, the campaigns shipped on time, and the team is producing more content than it did a year ago. And yet the ask for additional headcount gets deferred, the new initiative gets shelved, and the budget comes back unchanged. What's missing is a clear connection between the work and revenue.

That connection is where the conversation breaks down. The CMO knows marketing contributed to the pipeline. The CFO needs to see exactly how, in a format that can be compared across quarters. And the campaign operation that produced the results wasn't built to generate that kind of data.

The Budget Conversation Has Changed

The budget conversation used to center on allocation. How much goes to demand generation. How much goes to brand. How much goes to content. That conversation still happens, but a harder one has moved in front of it.

The question the CFO and the board are asking now is more fundamental: can marketing demonstrate its contribution to revenue? The allocation conversation assumes marketing's value is established and the question is where to direct it. The new conversation questions whether the value can be demonstrated at all.

Most CMOs feel this shift before they can name it. Three signs it's already happening:

- Budget requests require more supporting data than they used to.
- The data you provide gets questioned in ways it didn't before.
- Other functions (sales enablement, customer success, product marketing) start absorbing budget lines that used to belong to marketing.

Nobody announces that marketing is losing trust. The reallocation happens gradually, one deferred initiative at a time.

The irony is that marketing teams have more measurement infrastructure than ever. More dashboards, attribution tools, and campaign analytics. The volume of data increased, but the clarity didn't. CMOs walk into budget conversations with more slides and less certainty than five years ago, because the data is abundant and the story it tells is contradictory.

Why Measurement Broke

The measurement problem has a specific origin, and it's upstream of the dashboard. As teams added more tools, more channels, and more AI-assisted production, the campaign inputs became inconsistent. When every campaign is assembled differently, with different tools, different contributors, and different interpretations of the brief, comparing performance across campaigns becomes unreliable.

Think about how most marketing teams actually produce campaigns. Campaign A was built by two people using one AI tool, with the conversion path set up by a demand gen specialist who left three months later. Campaign B was built by three different people using two tools, with the conversion path configured by someone who inherited the work and made reasonable adjustments. Campaign C was partially outsourced. Each campaign produced results. The results can't be meaningfully compared because the inputs were different every time.

Attribution models require consistent inputs. When the inputs vary every cycle, the model produces numbers that nobody fully trusts. Marketing says the campaign drove pipeline. Sales says the leads weren't qualified. Finance says the cost-per-acquisition doesn't reconcile with the revenue attribution. Everyone is working from the same data and reaching different conclusions, because the data was generated by processes that changed every quarter.

The CMO presents pipeline numbers. The CFO asks what drove them. The CMO points to campaigns. The CFO asks which campaigns, and which parts of those campaigns, and the answer gets fuzzy because the measurement infrastructure wasn't designed for how the team produces work.

This is the conversation that keeps ending the same way. The work is real. The results are real. The proof is structurally incapable of being as precise as the CFO needs it to be, because the system that produced the results was built for production, one campaign at a time, with whatever tools and people were available that week. It was never built for measurement.

The Compound Effect on Budget Authority

Every quarter that marketing leadership can't demonstrate impact cleanly, budget authority erodes. The erosion is gradual enough that it doesn't register as a crisis. First, new initiatives require more justification than they used to. Then headcount requests get deferred to the next planning cycle. Then a budget line gets quietly reallocated to a function that can demonstrate ROI more directly.

The compounding works in both directions. A marketing leader who demonstrates clear, comparable impact in Q2 enters the Q3 conversation with credibility. Each quarter of clean data builds on the last. But a CMO who presents ambiguous data in Q2 enters Q3 with a deficit. After two or three quarters of unclear results, the conversation can shift from "how should we allocate the marketing budget" to "should the marketing budget grow at all." The authority in the room diminishes with each cycle the proof stays blurry.

Meanwhile, the team absorbs the pressure. Campaigns still need to ship and the pipeline targets haven't changed, but the headcount hasn't increased. The "do more with less" mandate that every marketing team lives with isn't arbitrary. It's the downstream consequence of a measurement problem that has compounded over multiple budget cycles. The team is being held accountable for results they can demonstrate operationally but can't prove financially.

The hardest part for marketers is that they can feel the gap between the work and the proof, but the tools they've been given to close it (better dashboards, more attribution models, additional analytics platforms) all operate on top of the same inconsistent data. Adding a better reporting layer on top of fragmented campaign inputs doesn't fix the problem. It visualizes it more clearly.

What Would Change the Conversation

The marketing leader who walks into the budget conversation with consistent campaign data has a fundamentally different experience. Same room, same CFO, different outcome. The difference is in the inputs.

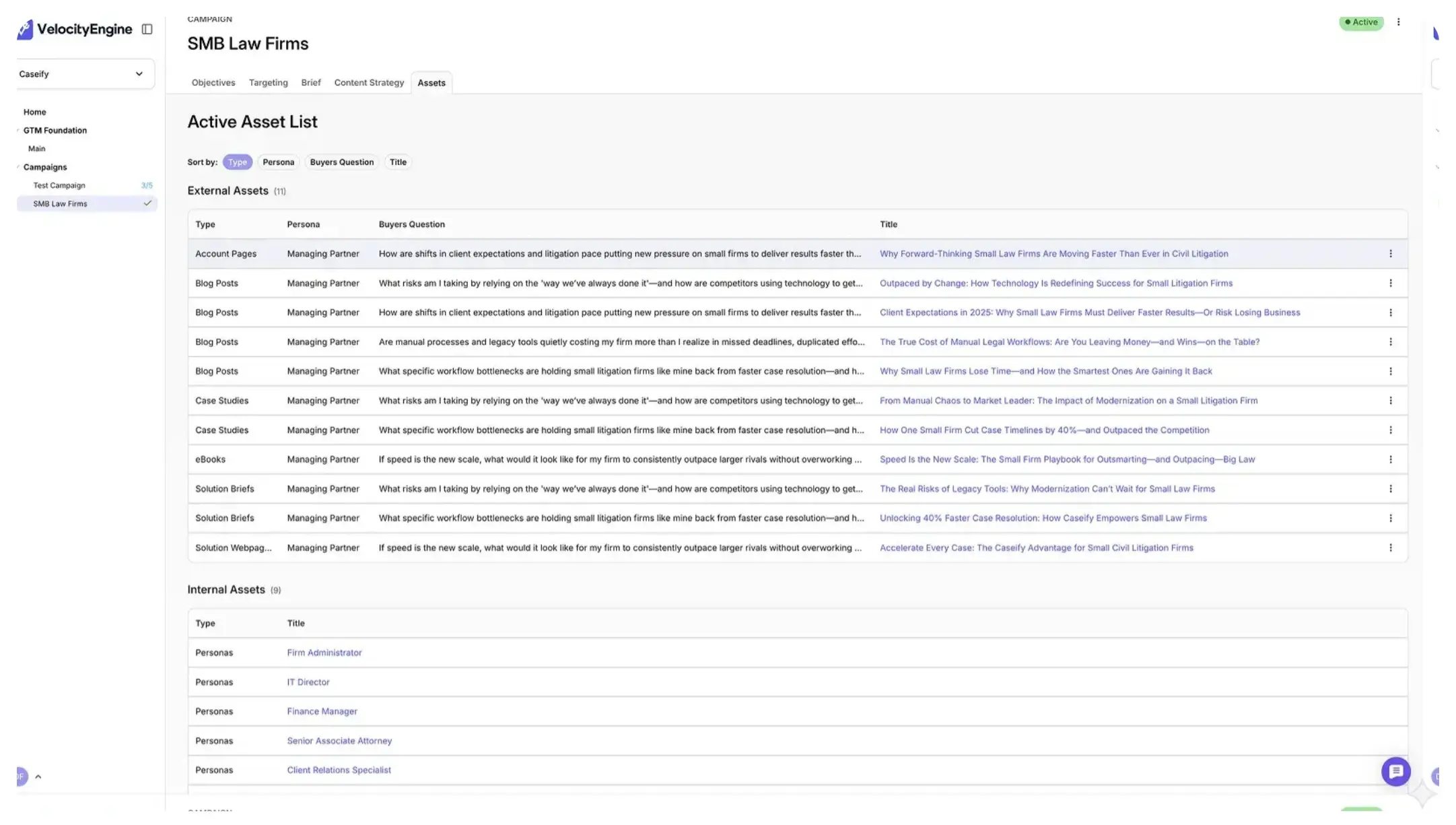

Consistent data means every campaign ran through the same structure: defined segments, defined conversion paths, defined measurement points. The performance data from Campaign A can be compared to Campaign B because both campaigns used the same system. The trends are meaningful because the baselines are comparable. The attribution model works because it's measuring apples against apples for the first time.

This doesn't require a new analytics platform or a data warehouse rebuild. It requires a consistent campaign production system that generates comparable data by design. When the segments are defined once and carry forward, when the conversion paths are specified in the campaign structure rather than improvised during production, and when the measurement points are embedded in the system rather than configured differently every cycle, the data that comes out is usable.

The budget conversation changes because the proof changes. A CMO who can say "Campaign A generated this pipeline at this cost with this conversion rate, Campaign B used the same structure and here are the results, and here's what we're adjusting for Campaign C based on what we learned" is making a case the CFO can evaluate. The data is comparable. The trends are visible. The story is credible because the system that produced the story was built for comparison.
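For teams that want to see what "comparable by design" could look like in practice, here is a minimal sketch in Python. It assumes every campaign is recorded with the same fields (segment, conversion path, spend, leads, opportunities, pipeline). The class name, field names, and all numbers are hypothetical and purely illustrative; they are not drawn from any real campaign or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CampaignResult:
    """One campaign recorded in a shared structure (all fields are hypothetical)."""
    name: str
    segment: str           # defined once and carried forward across campaigns
    conversion_path: str   # specified in the campaign structure, not improvised
    spend: float           # total campaign cost
    leads: int             # contacts that entered the conversion path
    opportunities: int     # leads that reached the shared measurement point
    pipeline: float        # pipeline value attributed to the campaign

    def conversion_rate(self) -> float:
        """Share of leads that became opportunities."""
        return self.opportunities / self.leads if self.leads else 0.0

    def pipeline_per_dollar(self) -> float:
        """Pipeline generated per unit of spend."""
        return self.pipeline / self.spend if self.spend else 0.0


def is_comparable(a: CampaignResult, b: CampaignResult) -> bool:
    """Two campaigns are comparable only if they share segment and conversion path."""
    return a.segment == b.segment and a.conversion_path == b.conversion_path


# Illustrative numbers only.
campaign_a = CampaignResult("Campaign A", "mid-market", "webinar-to-demo",
                            spend=20_000, leads=400, opportunities=40,
                            pipeline=300_000)
campaign_b = CampaignResult("Campaign B", "mid-market", "webinar-to-demo",
                            spend=25_000, leads=500, opportunities=40,
                            pipeline=250_000)

if is_comparable(campaign_a, campaign_b):
    for c in (campaign_a, campaign_b):
        print(f"{c.name}: conversion {c.conversion_rate():.1%}, "
              f"pipeline per dollar {c.pipeline_per_dollar():.2f}")
```

The point of the sketch is the `is_comparable` check: the side-by-side numbers are only printed when both campaigns ran through the same segment and conversion path, which is the condition the CFO's quarter-over-quarter comparison depends on.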

The budget conversation CMOs keep losing has nothing to do with whether marketing is working. It has everything to do with whether the campaign operation produces the kind of data that proves it. The cause is upstream, in the system that produces the campaigns and the data that comes back from them. Fix the system, and the proof follows.