The pitch is compelling. Train AI agents on your content. Build workflows that automate production. Connect tools that handle outreach, publishing, and follow-ups without manual intervention. McKinsey's latest State of AI research found that 88% of organizations now use AI in at least one business function, up from 78% a year earlier.

The momentum is real, and marketing teams are right in the middle of it. So the question is no longer whether to adopt AI agents. The question is what those agents are built on.

And for marketing teams, the answer is uncomfortable: agents trained on scattered documents produce scattered content faster. Agents without strategy context produce off-brand content faster. Humans automated the chaos.

What Agents Actually Inherit

AI agents are pattern machines; they do not have judgment (yet). They have inputs, and marketing teams are feeding them inputs that were already broken before AI entered the picture.

Think about what agents are actually pulling from: a messaging doc from early 2025 that nobody has updated. A brand voice guide that exists in three different versions across Google Drive, depending on the team. Positioning that gets interpreted differently by every person who touches a campaign. Customer proof points scattered across case studies, sales decks, and Slack threads.

These are the foundations AI agents are learning from, and agents do not question their inputs. They do not flag that the messaging feels off or that two documents contradict each other. They take whatever they are given and produce more of it, faster. The quality of the output is a direct reflection of the quality of the system underneath.

And that is before accounting for the variability in how people prompt AI. Each person writes prompts differently, creating even more inconsistency.
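To make the input problem concrete, here is a minimal sketch of the kind of pre-flight check an agent will not run on its own: it flags document types that exist in several competing versions and documents that have gone stale. Everything in it is an assumption for illustration, including the `audit_sources` name, the `kind`/`path`/`updated` fields, and the 180-day staleness threshold. It is not a description of any particular tool.

```python
from collections import defaultdict
from datetime import date


def audit_sources(sources, today, max_age_days=180):
    """Flag competing versions and stale documents before an agent reads them.

    `sources` is a list of dicts with hypothetical keys:
    'kind' (e.g. "brand_voice"), 'path', and 'updated' (a datetime.date).
    The 180-day default is an arbitrary illustrative threshold.
    """
    by_kind = defaultdict(list)
    for source in sources:
        by_kind[source["kind"]].append(source)

    issues = []
    for kind, docs in sorted(by_kind.items()):
        # More than one document of the same kind means agents may learn
        # from whichever version they happen to be pointed at.
        if len(docs) > 1:
            issues.append(f"{kind}: {len(docs)} competing versions")
        for doc in docs:
            age_days = (today - doc["updated"]).days
            if age_days > max_age_days:
                issues.append(f"{doc['path']}: last updated {age_days} days ago")
    return issues


if __name__ == "__main__":
    example = [
        {"kind": "messaging", "path": "messaging.md", "updated": date(2025, 2, 1)},
        {"kind": "brand_voice", "path": "voice_v1.md", "updated": date(2025, 12, 1)},
        {"kind": "brand_voice", "path": "voice_v2.md", "updated": date(2025, 12, 20)},
    ]
    for issue in audit_sources(example, today=date(2026, 1, 15)):
        print(issue)
```

A check like this does not fix the inputs. It only surfaces the contradictions a human still has to resolve before handing the documents to an agent.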

Disarray, Only Faster

Before AI, a three-person marketing team might produce two campaigns per quarter. The campaigns were slow, but at least the team had time to maintain some strategic consistency through sheer proximity to the work. Everyone was close enough to the original intent that drift stayed manageable.

Now that same team can produce ten or more campaigns per quarter. But the underlying system has not changed. The messaging still lives in a static, latent document. The campaign briefs still get interpreted differently by each person. The handoff between strategy and execution still loses context every time. AI did not fix any of that; it just made the output volume high enough that the cracks became visible.

IDC's FutureScape 2026 research reinforces this pattern. Their analysis warns that companies failing to establish high-quality, AI-ready foundations will face a 15% productivity loss as agentic systems scale. The issue is not the agent's capability but the quality of what the agent has to work with. When production is nearly free, the differentiator is not how fast teams can create, but whether what they create can compound.

What a System Looks Like (and What Most Teams Have Instead)

Marketing teams operate with what looks like a system but is actually a collection of disconnected inputs. There is messaging, a brand guide (or two), a content calendar, a project management tool, and several AI tools layered on top. Each component exists independently. None of them talk to each other in a way that preserves strategic context from one campaign to the next.

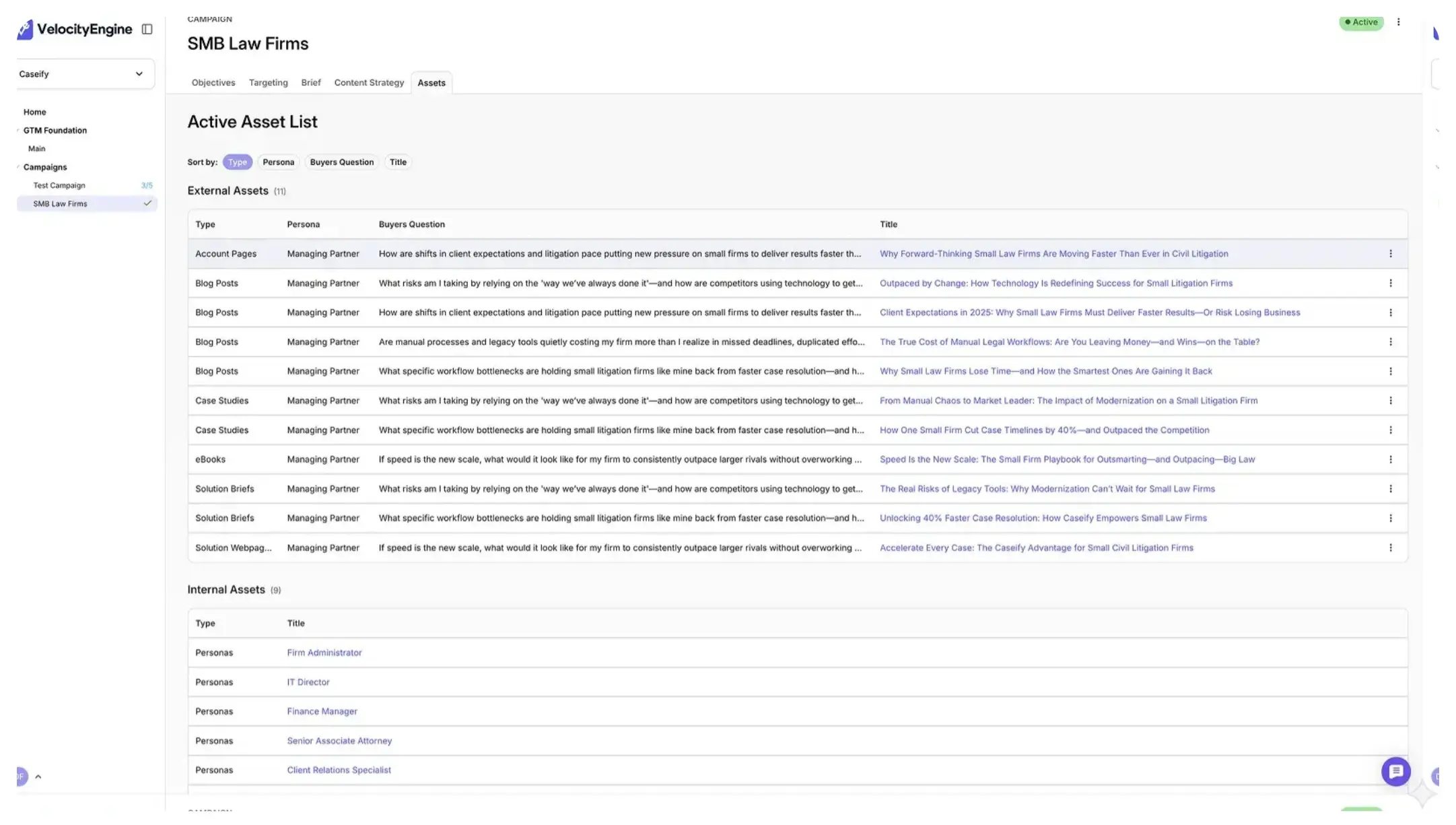

In an actual system, strategy is embedded into the workflow, not referenced from a separate document. Messaging, audience segments, proof points, and campaign architecture travel together so every touchpoint, whether built by a person or an agent, starts from the same foundation. When one campaign generates results, those learnings inform the next campaign automatically rather than getting lost in a post-mortem deck nobody reopens.
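One way to picture strategy that "travels together" is a single shared context object that every brief is rendered from. The sketch below is a simplified illustration under stated assumptions: `CampaignContext`, `build_brief`, and `record_learning` are hypothetical names invented for this example, not part of any product or library.

```python
from __future__ import annotations

from dataclasses import dataclass, replace


@dataclass(frozen=True)
class CampaignContext:
    """Hypothetical bundle of the strategy inputs that should travel together."""
    messaging: str
    audience_segments: tuple[str, ...]
    proof_points: tuple[str, ...]
    learnings: tuple[str, ...] = ()


def build_brief(ctx: CampaignContext, touchpoint: str) -> str:
    """Render a brief for a person or an agent from the same shared context."""
    lines = [
        f"Touchpoint: {touchpoint}",
        f"Messaging: {ctx.messaging}",
        "Audience: " + ", ".join(ctx.audience_segments),
        "Proof points: " + "; ".join(ctx.proof_points),
    ]
    if ctx.learnings:
        lines.append("Learnings: " + "; ".join(ctx.learnings))
    return "\n".join(lines)


def record_learning(ctx: CampaignContext, learning: str) -> CampaignContext:
    """Return a new context carrying one more learning into the next campaign."""
    return replace(ctx, learnings=ctx.learnings + (learning,))


if __name__ == "__main__":
    ctx = CampaignContext(
        messaging="Example positioning statement",
        audience_segments=("Example segment A", "Example segment B"),
        proof_points=("Example customer result",),
    )
    print(build_brief(ctx, "email"))
    next_ctx = record_learning(ctx, "Example learning from the last campaign")
    print(build_brief(next_ctx, "landing page"))
```

The design choice worth noticing is that briefs are derived from the context rather than written beside it, so an email and a landing page cannot start from different versions of the messaging, and a recorded learning shows up in every later brief without anyone reopening a deck.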

This is the distinction between AI that is governed and AI that is experimental. Governed AI operates within a structure that preserves brand, strategy, and context. Experimental AI operates in the open, producing output that may be individually impressive but collectively inconsistent. Same technology with completely different results. The variable is the system, not the LLM.

VelocityEngine was built around this principle. Rather than adding AI as a layer on top of existing tools, the platform treats campaign orchestration as the system itself, embedding strategy, messaging, and audience context directly into execution workflows. The result is that AI operates within a governed structure rather than in a vacuum. Teams like vLex have used this approach to launch campaigns in hours instead of months, with a three-person team producing the output of five.

Fix the Foundation, Then Accelerate

The instinct right now is to move fast on AI adoption. Every conference, every analyst report, every vendor pitch is telling marketing teams to build agents, automate workflows, and scale production. That instinct is not wrong, but it skips a step.

The step is making sure what AI is built on is worth scaling. If your messaging is fragmented, agents will scale fragmented messaging. If your campaign architecture is disconnected, agents will produce disconnected campaigns faster. If strategic context gets lost during handoffs today, AI will not magically preserve it tomorrow.

McKinsey found that only 6% of organizations qualify as AI high performers, those seeing meaningful impact on earnings. They did not get there by adding more agents. They got there by building systems worth scaling.